- v.

- to translocate, to replace, to transpose

- Related

- dislocation, displacement, ectopia, migrate, migration, migratory, rearrangement, translocation, transposition, transpositional

WordNet

- transfer a quantity from one side of an equation to the other side reversing its sign, in order to maintain equality

- change key; "Can you transpose this fugue into G major?"

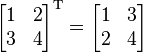

- a matrix formed by interchanging the rows and columns of a given matrix

- put (a piece of music) into another key

- any abnormal position of the organs of the body (syn.) heterotaxy

- the act of reversing the order or place of (syn.) reversal

- (electricity) a rearrangement of the relative positions of power lines in order to minimize the effects of mutual capacitance and inductance; "he wrote a textbook on the electrical effects of transposition"

- (mathematics) the transfer of a quantity from one side of an equation to the other along with a change of sign

- (music) playing in a different key from the key intended; moving the pitch of a piece of music upwards or downwards

- (genetics) a kind of mutation in which a chromosomal segment is transferred to a new position on the same or another chromosome

- a displacement of a part (especially a bone) from its normal position (as in the shoulder or the vertebral column)

- the act of disrupting an established order so it fails to continue; "the social dislocations resulting from government policies"; "his warning came after the breakdown of talks in London" (syn.) breakdown

- an event that results in a displacement or discontinuity (syn.) disruption

- (genetics) an exchange of chromosome parts; "translocations can result in serious congenital disorders"

- the transport of dissolved material within a plant

- the movement of persons from one country or locality to another

- (chemistry) the nonrandom movement of an atom or radical from one place to another within a molecule

- a group of people migrating together (especially in some given time period)

- the periodic passage of groups of animals (especially birds or fishes) from one region to another for feeding or breeding

- move periodically or seasonally; "birds migrate in the winter"; "The workers migrate to where the crops need harvesting"

- move from one country or region to another and settle there; "Many Germans migrated to South America in the mid-19th century"; "This tribe transmigrated many times over the centuries" (syn.) transmigrate

- (chemistry) a reaction in which an elementary substance displaces and sets free a constituent element from a compound (syn.) displacement reaction

- (psychiatry) a defense mechanism that transfers affect or reaction from the original object to some more acceptable one

- to move something from its natural environment (syn.) deracination

- act of removing from office or employment

- changing an arrangement

- used of animals that move seasonally; "migratory birds"
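The two mathematical senses listed above (moving a term across an equation with a change of sign, and interchanging the rows and columns of a matrix) can be illustrated with a small worked example. The specific equation and matrix are illustrative choices, not taken from the entry.

```latex
% Transposing a term: moving +3 to the other side reverses its sign.
\[
x + 3 = 7 \;\Longrightarrow\; x = 7 - 3 = 4
\]

% Transpose of a matrix: rows become columns and columns become rows.
\[
A = \begin{pmatrix} 1 & 2 & 3 \\ 4 & 5 & 6 \end{pmatrix},
\qquad
A^{\mathsf{T}} = \begin{pmatrix} 1 & 4 \\ 2 & 5 \\ 3 & 6 \end{pmatrix}
\]
```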

PrepTutorEJDIC

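- to reverse, replace, or move (a position, order, etc.) / (music) to transpose (a piece) into another key / (equations) to transpose (a term)

- replacement, transposition / (mathematics) transposition of a term / (music) transposition into another key

- change of position / dislocation (of a joint) / disorder, confusion

- 〈U〉〈C〉 migration, movement (of animals) / 〈C〉 (collectively) a migrating group (of people, animals, etc.)

- to migrate (from ... to ...) (+ from + noun + to + noun) / (of birds and animals) to move or migrate (periodically, with the seasons)

- taking the place of something, replacement / dismissal, removal from office / displacement (of a ship)

- rearrangement, reordering

- migrating or moving (periodically) / wandering, roving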

Wikipedia preview

Source (authority): Wikipedia, the free encyclopedia, "2015/11/20 00:22:24" (JST)

UpToDate Contents

A subscription is required to read the full text.

- 1. Rectovaginal, anovaginal, and colovesical fistulas

- 2. Z-plasty

- 3. Creating an arteriovenous fistula for hemodialysis

English Journal

- Lipid-protein interactions: Lessons learned from stress.

- Battle AR, Ridone P, Bavi N, Nakayama Y, Nikolaev YA, Martinac B.

- Biochimica et biophysica acta. Biochim Biophys Acta. 2015 Sep;1848(9):1744-56. doi: 10.1016/j.bbamem.2015.04.012. Epub 2015 Apr 25.

- Biological membranes are essential for normal function and regulation of cells, forming a physical barrier between extracellular and intracellular space and cellular compartments. These physical barriers are subject to mechanical stresses. As a consequence, nature has developed proteins that are abl

- PMID 25922225

- Focus on the legislative approach to short half life radioactive hospital waste releasing.

- Petrucci C, Traino AC.

- Physica medica: PM: an international journal devoted to the applications of physics to medicine and biology: official journal of the Italian Association of Biomedical Physics (AIFB). Phys Med. 2015 Jun 19. pii: S1120-1797(15)00141-6. doi: 10.1016/j.ejmp.2015.06.001. [Epub ahead of print]

- PURPOSE: We propose to summarize the advancements introduced by the new Directive 2013/59/Euratom concerning the concept of clearance, for which the radioactive medical waste represents a typical candidate. We also intend to spotlight disputable points in the regulatory scheme in force in Italy, as

- PMID 26099431

- Benzothiadiazole Derivatives as Fluorescence Imaging Probes: Beyond Classical Scaffolds.

- Neto BA, Carvalho PH, Correa JR.

- Accounts of chemical research. Acc Chem Res. 2015 Jun 16;48(6):1560-9. doi: 10.1021/ar500468p. Epub 2015 May 15.

- This Account describes the origins, features, importance, and trends of the use of fluorescent small-molecule 2,1,3-benzothiadiazole (BTD) derivatives as a new class of bioprobes applied to bioimaging analyses of several (live and fixed) cell types. BTDs have been successfully used as probes for a p

- PMID 25978615

- The application of the European heat wave of 2003 to Korean cities to analyze impacts on heat-related mortality.

- Greene JS, Kalkstein LS, Kim KR, Choi YJ, Lee DG.

- International journal of biometeorology. Int J Biometeorol. 2015 Jun 16. [Epub ahead of print]

- The goal of this research is to transpose the unprecedented 2003 European excessive heat event to six Korean cities and to develop meteorological analogs for each. Since this heat episode is not a model but an actual event, we can use a plausible analog to assess the risk of increasing heat on these

- PMID 26076864

Japanese Journal

- Parallel performance evaluation of the Moe variant of generalized product-type iterative methods

- 藤野 清次,岩里 洸介,MoeThuThu

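- IPSJ SIG Technical Reports. [High Performance Computing] 2014-HPC-143(20), 1-6, 2014-02-24

- In this paper, we discuss the efficiency of conventional iterative solvers from the standpoint of reducing the number of synchronizations in a distributed parallel environment. Four iterative methods are considered: the original GPBiCG method, the GPBiCG_AR method, and the newly devised GPBiCG_AR_β and GPBiCG_AR_β methods. Focusing on the number of synchronizations per iteration of these solvers, we succeeded in reducing it. Through numerical experiments, these …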

- NAID 110009675734

- Elucidating the fast response processes of the climate system through the application of Transpose-AMIP

- 釜江 陽一,渡部 雅浩

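- Predictability of extreme events in one-week and one-month forecasts, 179-182, 2013-03

- FY2012 General Research Meeting of the Disaster Prevention Research Institute, Kyoto University (24K-08), "Predictability of extreme events in one-week and one-month forecasts", Large Seminar Room, Collaborative Research Building, Disaster Prevention Research Institute, Kyoto University, 2012/11/20-22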

- NAID 120005244482

- Computation-Communication Overlap Techniques for Parallel Spectral Calculations in Gyrokinetic Vlasov Simulations

- MAEYAMA Shinya,WATANABE Tomohiko,IDOMURA Yasuhiro,NAKATA Motoki,NUNAMI Masanori,ISHIZAWA Akihiro

- Plasma and Fusion Research 8(0), 1403150-1403150, 2013

- … A key numerical technique is the parallel Fast Fourier Transform (FFT) required for parallel spectral calculations, where masking of the cost of inter-node transpose communications is essential to improve strong scaling. … To mask communication costs, computation-communication overlap techniques are applied for FFTs and transpose with the help of the hybrid parallelization of message passing interface and open multi-processing. …

- NAID 130003392944

- Breaking the performance bottleneck of sparse matrix-vector multiplication on SIMD processors

- Zhang Kai,Chen Shuming,Wang Yaohua,Wan Jianghua

- IEICE Electronics Express 10(9), 20130147-20130147, 2013

- The low utilization of SIMD units and memory bandwidth is the main performance bottleneck on SIMD processors for sparse matrix-vector multiplication (SpMV), which is one of the most important kernels …

- NAID 130003365022

Related Links

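- How to use the TRANSPOSE function: the TRANSPOSE function converts the rows and columns of a specified array. ... Entering =TRANSPOSE(A1:C1) in A3, selecting A2:A4, and then entering it as an array formula (Enter while holding Ctrl+Shift) gives the result in the table below.

- Swapping rows and columns: transpose, =TRANSPOSE(array). The formula must be entered as an array formula: confirm the entry with [Enter] while holding down the [Ctrl] and [Shift] keys. Problem: there is a data table like the one in the figure below. B3:E9 ...

- Procedure: swapping rows and columns with the TRANSPOSE function. Example: from the 2-row, 3-column table in A1:C2, swap rows and columns to create a 3-row, 2-column table in cells A11:B13. Select cells A11:B13 → enter "=TRANSPOSE(A1:C2)" → [Ctrl] key + [Shift] key ...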
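The row-and-column swap that the linked pages describe for a 2-row, 3-column range can be sketched in Python. This is only a minimal illustration of the same operation, not how the spreadsheet function is implemented; the helper name `transpose` and the placeholder cell values are assumptions made for the example.

```python
def transpose(rows):
    """Return a new table whose rows are the columns of `rows`.

    `rows` is assumed to be a rectangular list of lists (hypothetical
    stand-in for a spreadsheet range).
    """
    if not rows:
        return []
    width = len(rows[0])
    if any(len(row) != width for row in rows):
        raise ValueError("all rows must have the same length")
    # zip(*rows) groups the i-th element of every row, i.e. column i.
    return [list(column) for column in zip(*rows)]


# Placeholder values standing in for a 2-row x 3-column range such as A1:C2.
source = [
    ["a1", "b1", "c1"],
    ["a2", "b2", "c2"],
]

# The result has 3 rows x 2 columns, the shape of a target such as A11:B13.
result = transpose(source)
for row in result:
    print(row)
```

Transposing twice returns the original table, which is a quick sanity check for any implementation of this operation.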

★Link table★

| Linked from | "migration", "rearrangement", "migrate", "transpositional", "ectopia" |

「migration」

- n.

- Related

- chain migration, destination, dislocation, ectopia, electrophoresis, electrophoretic, emigration, immigration, implantation, import, ingression, migrate, migratory, rearrangement, transfect, transfection, translocation, transpose, transposition, transpositional, wander, wandering

「rearrangement」

- n.

- Related

- dislocation, ectopia, migrate, migration, migratory, rearrange, reconstitute, reconstitution, reform, reformation, reorganization, reorganize, translocation, transpose, transposition, transpositional

「migrate」

- v.

- to migrate (of cells), to move, to translocate

- Related

- dislocation, ectopia, migration, migratory, move, movement, rearrangement, run, shift, transfer, translocate, translocation, transpose, transposition, transpositional, travel, wander, wandering

「transpositional」

- adj.

- of or relating to transposition, transpositional

- Related

- dislocation, ectopia, migrate, migration, migratory, rearrangement, translocation, translocational, translocationally, transposable, transpose, transposition

「ectopia」

- n.

- Related

- aberrant, dislocation, dystopia, heterotropic, migrate, migration, migratory, rearrangement, translocation, transpose, transposition, transpositional